Close to Zero* Fee Policy

get2Clouds is committed to providing the tools needed for the rapid global adoption of Bitcoin.

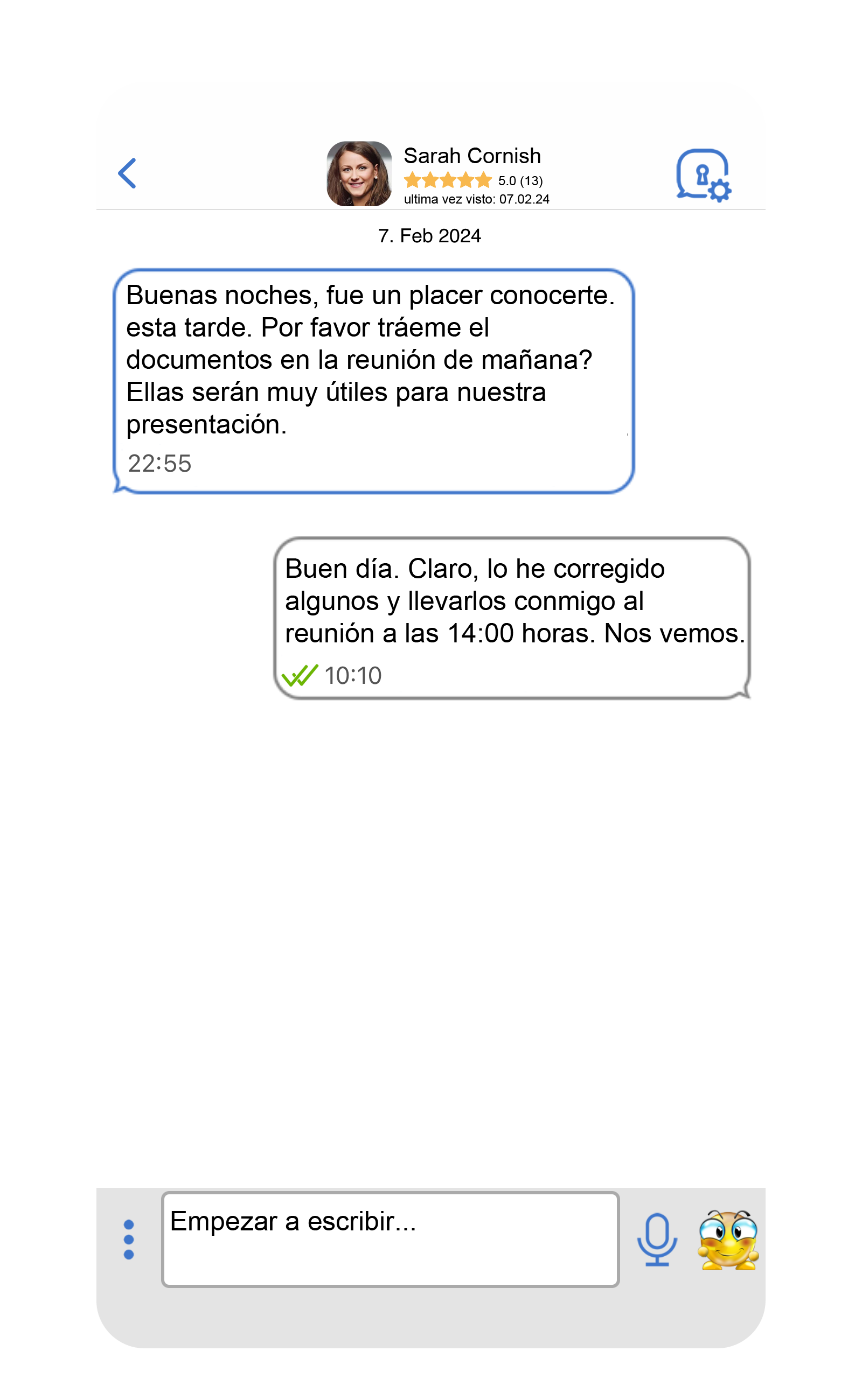

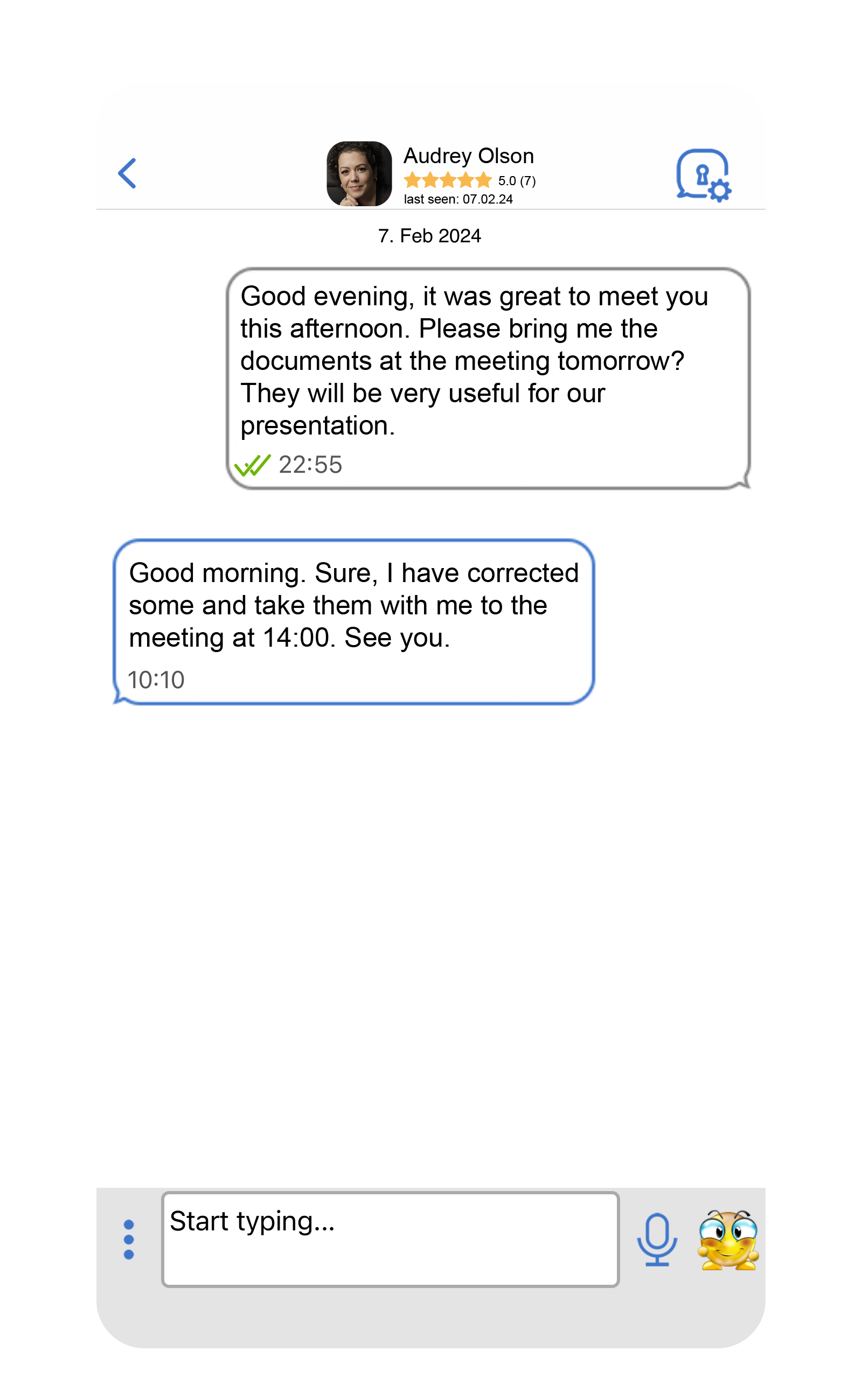

E2E encrypted Global Messaging:

Free

Global Calling:

Free

Global Video Chat:

Free

File Storage (2GB):

Free

Protected and fast large file transfer across all platforms:

Free

Bitcoin Transactions:

Free & Instantaneous Online Wallet (Borderless) / Offline Cash-Wallet (Peer-to-Peer)

Complete (Data, Voice, Transactions) End-2-End Encryption:

Free & Always Enabled

No SIM card, no bank account, no credit card, no internet

- Fees for the encrypted privacy messenger for Global Calling, Messaging, Video-Chatting, and Bitcoin-Payments: ZERO

- Fees for 2 GB of secure get2Clouds Cloud Space: ZERO

- Fees for incoming/outgoing transactions to Bitcoin Treasury: ZERO (of course, we can’t control Bitcoin’s native network fees)

- Fees for all Bitcoin Online Wallet transactions: close to ZERO*

- Fees for all Bitcoin Cash-Wallet transactions: close to ZERO*

- Fees for all Bitcoin Cash-Coin transactions: close to ZERO*

*close to ZERO means one per thousand (0.1%). To put it concretely: when you make a transfer of 10 USD within our app, 1 cent is deducted from the transaction; for a 100 USD transaction, ten cents are deducted.
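The one-per-thousand rule can be illustrated with a short calculation. This is only a sketch and not the get2Clouds implementation: the function name `in_app_fee` is hypothetical, and rounding to the nearest cent is an assumption, since the policy does not say how fractions of a cent are handled.

```python
from decimal import Decimal, ROUND_HALF_UP

# One per thousand, as stated in the footnote above.
FEE_RATE = Decimal("0.001")


def in_app_fee(amount_usd: str) -> Decimal:
    """Return the fee in USD for an in-app transfer.

    Rounding to the nearest cent is an assumption made for this example;
    the policy itself does not specify a rounding rule.
    """
    amount = Decimal(amount_usd)
    return (amount * FEE_RATE).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


print(in_app_fee("10"))   # 0.01 (1 cent on a 10 USD transfer)
print(in_app_fee("100"))  # 0.10 (ten cents on a 100 USD transfer)
```

`Decimal` is used instead of floating point so that the cent amounts match the figures in the footnote exactly.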